AI Workforce - Multi-agent AI platform where agents collaborate to solve complex problems

Built as agentic AI emerged, AI Workforce enables multiple AI agents to interact with each other, tackling problems from different perspectives—development, testing, compliance—like a real team. Proven in production through extensive use in Qandai development.

- Project: AI Workforce

- Year:

- Service: AI Architecture, Product Development

Overview

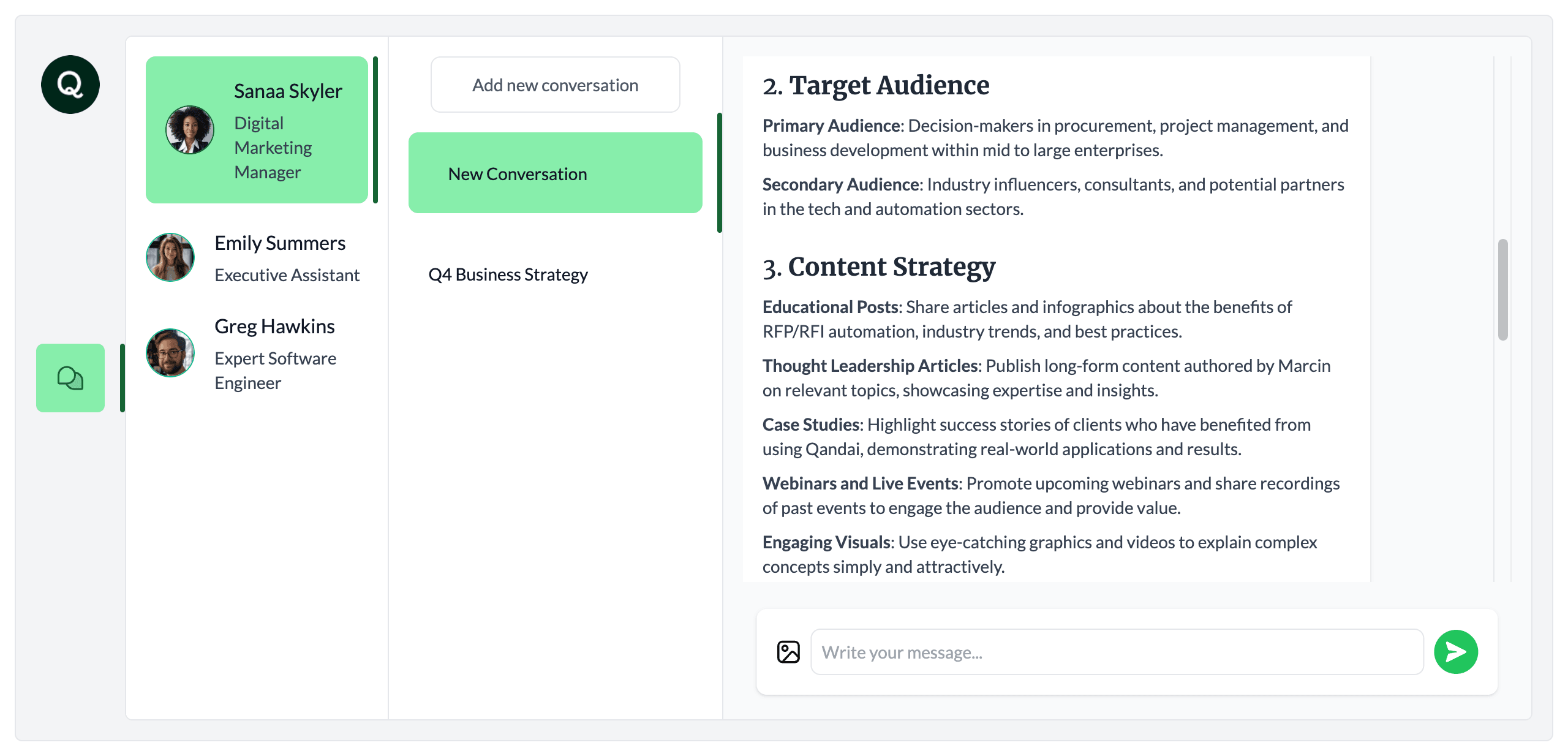

AI Workforce was born from watching agentic AI emerge as a paradigm shift in how we work with AI. Instead of single-purpose chatbots, what if we could build teams of specialized AI agents that collaborate with each other—one developing functionality, another testing it, another ensuring compliance—approaching problems from multiple perspectives like human teams do?

The platform became my laboratory for exploring multi-agent coordination and was battle-tested in production: I used AI Workforce extensively during the latter stages of Qandai's development, where AI agents handled real feature development, testing, and quality assurance workflows.

Built as a native Mac desktop application using Electron, AI Workforce introduced a skills-based system in which agents possess specific capabilities (such as data visualization through a charting skill) that serve both as functional building blocks and as potential monetization layers.

The Vision

The promise of agentic AI isn't just smarter chatbots—it's AI agents that can work together, each bringing specialized expertise to solve complex problems. Imagine developing a feature where:

- A Developer Agent writes initial implementation

- A Test Agent creates test cases and validates functionality

- A Compliance Agent reviews against regulatory requirements

- A Documentation Agent captures decisions and creates user guides

Each agent sees the problem through its specialized lens, catching issues and adding value the others wouldn't. This multi-perspective approach mirrors how the best human teams operate.

My Role

I built AI Workforce from concept through production deployment, architecting the agent coordination system, implementing the skills framework, and validating the platform through real-world use in Qandai's development process.

Multi-Agent Coordination Architecture

Challenge

Build a system where multiple AI agents can interact with each other, maintain conversation context, coordinate handoffs, and tackle problems collaboratively—not just respond to human prompts in isolation.

Contribution

- Designed agent-to-agent communication protocols allowing agents to directly engage each other with questions, share context, and coordinate workflows

- Implemented conversation threading that maintains context across multi-agent interactions while preventing context collapse

- Created agent specialization system where each agent has a defined role (developer, tester, compliance reviewer) with role-specific prompts and knowledge

- Built orchestration layer managing agent interactions, determining when to escalate between agents, and preventing infinite loops or circular conversations

- Developed shared memory systems allowing agents to access common knowledge while maintaining agent-specific context
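To make the coordination ideas above concrete, here is a minimal TypeScript sketch of an orchestrator with a turn budget, loop detection, and shared versus per-agent memory. It is not the AI Workforce code: `runConversation`, `addPrivateNote`, the `Memory` shape, and the three roles are hypothetical, and agents are plain functions so the example runs without any model API.

```typescript
// Hypothetical orchestrator sketch: agents are plain functions so it runs
// without a model API. A real agent would call an LLM inside its function.
type Role = "developer" | "tester" | "compliance";

interface Message {
  from: Role | "user";
  to: Role | "done";
  text: string;
}

// Shared memory every agent can read, plus private notes per role.
interface Memory {
  shared: string[];
  privateNotes: Map<Role, string[]>;
}

type Agent = (incoming: Message, memory: Memory) => Message;

interface RunResult {
  transcript: Message[];
  memory: Memory;
  stopReason: "done" | "turn-limit" | "loop-detected";
}

function addPrivateNote(memory: Memory, role: Role, note: string): void {
  const notes = memory.privateNotes.get(role) ?? [];
  notes.push(note);
  memory.privateNotes.set(role, notes);
}

function runConversation(agents: Record<Role, Agent>, first: Message, maxTurns = 10): RunResult {
  const memory: Memory = { shared: [], privateNotes: new Map() };
  const transcript: Message[] = [first];
  const seen = new Set<string>();
  let current = first;

  for (let turn = 0; turn < maxTurns; turn++) {
    const target = current.to;
    if (target === "done") return { transcript, memory, stopReason: "done" };
    const reply = agents[target](current, memory);
    transcript.push(reply);
    // The same sender, recipient and text seen twice means the agents are circling.
    const key = `${reply.from}>${reply.to}:${reply.text}`;
    if (seen.has(key)) return { transcript, memory, stopReason: "loop-detected" };
    seen.add(key);
    current = reply;
  }
  return { transcript, memory, stopReason: current.to === "done" ? "done" : "turn-limit" };
}

// Usage: a developer -> tester -> compliance pass that ends cleanly.
const team: Record<Role, Agent> = {
  developer: (_m, mem) => {
    mem.shared.push("patch v1");
    return { from: "developer", to: "tester", text: "patch v1 ready" };
  },
  tester: (_m, mem) => {
    addPrivateNote(mem, "tester", "edge case: empty answer list");
    return { from: "tester", to: "compliance", text: `checked ${mem.shared.length} artifact(s)` };
  },
  compliance: () => ({ from: "compliance", to: "done", text: "no concerns" }),
};
const result = runConversation(team, { from: "user", to: "developer", text: "add CSV export" });
console.log(result.stopReason, result.transcript.length); // done 4
```

The repeated-message check is deliberately crude; it only catches exact repeats, so a turn budget stays in place as a backstop for agents that circle with slightly different wording.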

Outcome

A functioning multi-agent system where AI agents genuinely collaborate. In Qandai development sessions, I'd watch the Developer and Test agents have back-and-forth conversations about implementation approaches, with the Compliance Agent interjecting when regulatory concerns arose, mimicking how real teams work.

Skills-Based Agent System

Challenge

Create extensible agent capabilities that could be mixed and matched, serve as monetization units, and enable agents to perform actions beyond text generation—particularly data visualization.

Contribution

- Architected a skills system where agents possess specific capabilities that extend beyond language generation

- Developed the Charting Skill as proof-of-concept, enabling agents to create graphs, charts, and data visualizations dynamically based on conversation context

- Designed skills to be composable—agents could combine multiple skills to accomplish complex tasks

- Built skill invocation system where agents recognize when to apply specific skills based on task requirements

- Created skill marketplace concept as future monetization layer—users pay for premium skills, agents unlock new capabilities
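One way to picture a composable, gateable skills layer is a small registry: each skill declares trigger words, and every matching skill the user has unlocked runs against one shared context. This is a sketch under assumptions; `SkillRegistry`, the keyword triggers, the `premium` flag, and the `summary-stats` skill are hypothetical, and the charting skill here only emits a chart description rather than rendering anything.

```typescript
// Hypothetical skills registry: skills declare trigger words, and every matching
// skill the user has unlocked runs against one shared context (composition).
interface ChartSpec {
  kind: "bar";
  labels: string[];
  values: number[];
}

interface SkillContext {
  task: string;
  data: Record<string, number>;
  outputs: Array<ChartSpec | { mean: number }>;
}

interface Skill {
  name: string;
  premium: boolean; // marketplace flag: must be unlocked before use
  triggers: string[];
  run(ctx: SkillContext): void;
}

const chartingSkill: Skill = {
  name: "charting",
  premium: false,
  triggers: ["chart", "graph", "visualize"],
  run(ctx) {
    const labels = Object.keys(ctx.data);
    ctx.outputs.push({ kind: "bar", labels, values: labels.map((l) => ctx.data[l]) });
  },
};

const summarySkill: Skill = {
  name: "summary-stats",
  premium: true,
  triggers: ["summarize", "average"],
  run(ctx) {
    const values = Object.keys(ctx.data).map((k) => ctx.data[k]);
    const total = values.reduce((a, b) => a + b, 0);
    ctx.outputs.push({ mean: values.length > 0 ? total / values.length : 0 });
  },
};

class SkillRegistry {
  private skills: Skill[] = [];

  register(skill: Skill): void {
    this.skills.push(skill);
  }

  // A skill applies when a trigger appears in the task and it is free or unlocked.
  select(task: string, unlocked: Set<string>): Skill[] {
    const lower = task.toLowerCase();
    return this.skills.filter(
      (s) => (!s.premium || unlocked.has(s.name)) && s.triggers.some((t) => lower.indexOf(t) !== -1),
    );
  }

  invoke(task: string, data: Record<string, number>, unlocked: Set<string>): SkillContext {
    const ctx: SkillContext = { task, data, outputs: [] };
    for (const skill of this.select(task, unlocked)) skill.run(ctx);
    return ctx;
  }
}

// Usage: the same request yields more output once the premium skill is unlocked.
const registry = new SkillRegistry();
registry.register(chartingSkill);
registry.register(summarySkill);
const task = "Chart and summarize answers per question";
const free = registry.invoke(task, { q1: 12, q2: 7 }, new Set<string>());
const paid = registry.invoke(task, { q1: 12, q2: 7 }, new Set<string>(["summary-stats"]));
console.log(free.outputs.length, paid.outputs.length); // 1 2
```

Keyword triggers keep the sketch deterministic; in an LLM-driven agent the model itself would more likely choose which skill to call from a list of skill descriptions.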

Outcome

Agents that could not only discuss data but visualize it. The charting skill proved especially valuable in Qandai development for analyzing questionnaire response patterns and performance metrics. The skills architecture validated the monetization hypothesis: users value specific agent capabilities, not just generic intelligence.

Production Use in Qandai Development

Challenge

Move beyond theoretical multi-agent systems to real-world application—using AI Workforce to actually build features for Qandai during its later development phases.

Contribution

- Integrated AI Workforce into daily Qandai development workflow

- Used multi-agent teams to develop new features: Developer Agent wrote code, Test Agent created test cases, Compliance Agent reviewed against regulatory requirements

- Validated that agent-to-agent conversation caught edge cases and compliance issues human review might miss

- Gathered qualitative data on where multi-agent approaches added value versus adding overhead

- Refined agent coordination based on real-world friction points discovered during production use

Outcome

Real-world validation that multi-agent systems can contribute meaningfully to software development. The multi-perspective approach caught issues (particularly around compliance and edge cases) that single-agent or human-only approaches missed. However, it also revealed coordination overhead—not every task benefits from multiple agents.

Desktop Application Development

Challenge

Deliver AI Workforce as a native Mac experience that feels like an installed application, not a web page in a browser.

Contribution

- Packaged web application using Electron for native Mac desktop distribution

- Designed application to run locally with cloud AI model access—balancing performance and capability

- Created Mac-native experience with menu bar integration, keyboard shortcuts, and system notifications

- Optimized for offline-first operation where possible, with graceful degradation when AI services are unavailable
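Graceful degradation can be as simple as queuing a prompt locally when the cloud call fails and replaying it once the service is back. The sketch below assumes a hypothetical `ResilientSession` wrapper around an injected `ModelClient`; it is kept synchronous for brevity, whereas a real model client would be asynchronous.

```typescript
// Hypothetical sketch of graceful degradation: a failed cloud call queues the
// prompt locally instead of surfacing an error. Synchronous for brevity.
type ModelClient = (prompt: string) => string;

type SendResult =
  | { status: "ok"; reply: string }
  | { status: "queued"; queueLength: number };

class ResilientSession {
  private readonly client: ModelClient;
  private readonly pending: string[] = [];

  constructor(client: ModelClient) {
    this.client = client;
  }

  send(prompt: string): SendResult {
    try {
      return { status: "ok", reply: this.client(prompt) };
    } catch {
      // Service unreachable: keep the prompt so the session can continue later.
      this.pending.push(prompt);
      return { status: "queued", queueLength: this.pending.length };
    }
  }

  // Replay queued prompts in order; stop at the first failure and keep the rest.
  flush(): string[] {
    const replies: string[] = [];
    while (this.pending.length > 0) {
      try {
        replies.push(this.client(this.pending[0]));
      } catch {
        break;
      }
      this.pending.shift();
    }
    return replies;
  }
}

// Usage: simulate the AI service dropping out and coming back.
let online = false;
const session = new ResilientSession((p) => {
  if (!online) throw new Error("offline");
  return `reply to ${p}`;
});
const queued = session.send("summarize test failures");
online = true;
const replayed = session.flush();
console.log(queued.status, replayed.length); // queued 1
```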

Outcome

A native Mac application that felt like professional desktop software rather than a page in a browser tab. Users could launch AI Workforce from their dock and work in a dedicated environment optimized for extended multi-agent sessions.

Impact & Results

- Production: used in Qandai development

- Charting: skills system validated

- Multi-agent: coordination architecture

Platform Achievements

- Built fully functional multi-agent system with agent-to-agent communication

- Developed skills-based architecture with charting capability as proof-of-concept

- Deployed as native Mac desktop application using Electron

- Validated through real-world use in Qandai's later development phases

Technical Validation

- Proved multi-agent coordination could catch issues (edge cases, compliance concerns) that single-agent systems miss

- Demonstrated that a skills-based architecture enables monetization beyond base intelligence

- Validated that agentic AI adds meaningful value in software development workflows when properly orchestrated

Strategic Learning

- Gained first-hand experience with agentic AI coordination challenges and opportunities

- Understood which problems benefit from multi-perspective agent approaches versus single-agent efficiency

- Built practical knowledge about agent prompt engineering, context management, and coordination protocols

Key Learnings

Multi-Agent Coordination is Non-Trivial

Getting AI agents to genuinely collaborate requires careful orchestration. Without guardrails, agents can fall into loops, lose context, or defer endlessly. The key insight: agents need clear roles, explicit handoff protocols, and well-defined responsibilities—just like human teams. The best multi-agent systems mirror good organizational design principles.
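Explicit handoff protocols can be expressed as data: a table of which role may hand work to which, a rule that every handoff names its deliverable, and a cap on how often two agents may bounce work back and forth. The roles, the `allowedHandoffs` graph, and the limit below are illustrative assumptions, not the platform's actual configuration.

```typescript
// Hypothetical handoff protocol: work may only move along declared edges, and
// every handoff must name what is being handed over.
type AgentRole = "developer" | "tester" | "compliance" | "docs";

interface Handoff {
  from: AgentRole;
  to: AgentRole;
  deliverable: string;
}

// Assumed workflow graph for illustration only.
const allowedHandoffs: Record<AgentRole, AgentRole[]> = {
  developer: ["tester"],
  tester: ["developer", "compliance"],
  compliance: ["developer", "docs"],
  docs: [],
};

// Returns null when the handoff is valid, otherwise a reason the orchestrator can log.
function validateHandoff(h: Handoff): string | null {
  if (allowedHandoffs[h.from].indexOf(h.to) === -1) {
    return `${h.from} may not hand off to ${h.to}`;
  }
  if (h.deliverable.trim() === "") {
    return `${h.from} must say what is being handed to ${h.to}`;
  }
  return null;
}

// Guard against endless deferral: true once any pair of agents exceeds `limit`
// handoffs between them, counting both directions.
function exceedsBounceLimit(history: Handoff[], limit = 4): boolean {
  const counts = new Map<string, number>();
  for (const h of history) {
    const pair = [h.from, h.to].sort().join("<>");
    const n = (counts.get(pair) ?? 0) + 1;
    if (n > limit) return true;
    counts.set(pair, n);
  }
  return false;
}

// Usage
console.log(validateHandoff({ from: "developer", to: "compliance", deliverable: "patch" }));
// developer may not hand off to compliance
```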

Not Every Problem Needs Multiple Agents

Production use in Qandai revealed an important nuance: multi-agent approaches excel at catching edge cases, ensuring compliance, and providing multiple perspectives—but they add latency and token cost. Simple, well-defined tasks are often better served by single specialized agents. Multi-agent systems shine for complex, high-stakes decisions requiring diverse viewpoints.
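The single-versus-team tradeoff can be made explicit with a small router that scores a task on rough signals of complexity and stakes. The `TaskProfile` fields, weights, and threshold below are hypothetical and purely illustrative; the point is that the decision to pay for multiple agents becomes a visible, tunable rule.

```typescript
// Hypothetical router: send a task to one agent or to a full team based on rough
// signals of complexity and stakes. Weights and threshold are illustrative only.
interface TaskProfile {
  filesTouched: number;
  touchesRegulatedData: boolean;
  userFacing: boolean;
}

interface Route {
  mode: "single" | "team";
  agents: string[];
}

function routeTask(task: TaskProfile, threshold = 3): Route {
  let score = 0;
  if (task.filesTouched > 3) score += 2; // broad changes are more likely to hide edge cases
  if (task.touchesRegulatedData) score += 3; // compliance review is worth the extra latency and tokens
  if (task.userFacing) score += 1;
  return score >= threshold
    ? { mode: "team", agents: ["developer", "tester", "compliance"] }
    : { mode: "single", agents: ["developer"] };
}

// Usage: a small UI tweak stays single-agent; a broad user-facing change gets a team.
console.log(routeTask({ filesTouched: 1, touchesRegulatedData: false, userFacing: true }).mode); // single
console.log(routeTask({ filesTouched: 6, touchesRegulatedData: false, userFacing: true }).mode); // team
```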

Skills as Monetization Units Work

The charting skill validated that users value specific agent capabilities beyond generic intelligence. This suggests a path for agentic AI monetization: free base agents with premium skills (advanced analysis, specialized integrations, domain expertise). Users pay for capabilities, not conversations.

Agent Specialization Requires Careful Prompt Engineering

Creating agents with distinct personalities and expertise areas through prompts alone is challenging. Developer Agent and Test Agent would sometimes drift toward similar thinking patterns. Successful specialization required continuous prompt refinement, role-specific knowledge bases, and explicit instructions to maintain perspective. Agentic AI prompt engineering is its own discipline.
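A sketch of how role-specific prompts, a slice of role knowledge, and an explicit "hold your perspective" instruction might be assembled into one system prompt follows. The `RoleCard` structure, the wording, and `buildSystemPrompt` are hypothetical; the real prompts are not reproduced here.

```typescript
// Hypothetical sketch: assemble a role-specific system prompt from a role card,
// retrieved knowledge snippets, and an explicit "hold your perspective" rule.
interface RoleCard {
  name: string;
  focus: string;
  mustNot: string[];
}

const testerCard: RoleCard = {
  name: "Test Agent",
  focus: "find inputs and states that break the implementation",
  mustNot: ["propose new features", "rewrite the implementation yourself"],
};

function buildSystemPrompt(card: RoleCard, knowledge: string[], maxSnippets = 3): string {
  const lines: string[] = [
    `You are the ${card.name}. Your only focus: ${card.focus}.`,
    ...card.mustNot.map((rule) => `Do not ${rule}.`),
    "If another agent's reasoning pulls you toward their role, restate your own perspective before replying.",
  ];
  // Include only the top few knowledge snippets to keep the prompt focused.
  const snippets = knowledge.slice(0, maxSnippets);
  if (snippets.length > 0) {
    lines.push("Reference material for your role:");
    snippets.forEach((s, i) => lines.push(`[${i + 1}] ${s}`));
  }
  return lines.join("\n");
}

// Usage
console.log(buildSystemPrompt(testerCard, ["Empty questionnaires are valid input."]));
```

Making the role's exclusions explicit ("Do not ...") is one plausible counter to the drift described above, since it gives the model something concrete to hold onto when another agent's reasoning is persuasive.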

Desktop Distribution Still Matters

Packaging AI Workforce as a native Mac app with Electron created perceived value beyond the web version. Users treated it as "professional software" rather than "another AI chatbot." Desktop distribution signals commitment and permanence. For certain audiences, native apps carry credibility web apps don't.